Zyphra Releases ZAYA1-8B, a Reasoning Model Trained on AMD and Optimized for Maximum Intelligence Density per Parameter

PR Newswire

SAN FRANCISCO, May 6, 2026

ZAYA1-8B delivers reasoning, mathematics, and coding performance competitive with models many times larger, achieving high intelligence density with under one billion active parameters trained on full-stack AMD infrastructure.

SAN FRANCISCO, May 6, 2026 /PRNewswire/ -- Zyphra today announced ZAYA1-8B, a mixture-of-experts (MoE) language model that matches or exceeds substantially larger open-weight models on complex reasoning, mathematics, and coding tasks while using fewer than one billion active parameters. Built on Zyphra's AMD-native training stack and the company's prior ZAYA1-base release, ZAYA1-8B was trained on custom AMD Instinct™ MI300X clusters with AMD Pensando Pollara networking on IBM Cloud infrastructure.

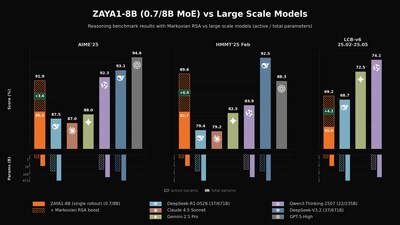

With fewer than one billion active parameters, ZAYA1-8B achieves a high intelligence density per parameter, which lets it perform competitively with models many times its size across mathematics benchmarks (AIME, HMMT), coding (LiveCodeBench), reasoning, knowledge retrieval (GPQA-Diamond), and instruction following (IFEval, IFBench). ZAYA1-8B matches or exceeds open-weight models such as Nemotron-3-Nano-30B-A3B and Mistral-Small-4-119B while remaining competitive with first-generation frontier reasoning models, including DeepSeek-R1-0528 and Gemini-2.5-Pro.

Alongside ZAYA1-8B, Zyphra introduces Markovian RSA, a novel test-time compute methodology that combines parallel trace generation with fixed-length context chunking to enable unbounded reasoning while keeping memory costs constant. With this methodology, ZAYA1-8B approaches or exceeds frontier models such as Claude 4.5 Sonnet, Gemini-2.5-Pro, and DeepSeek-V3.2 on mathematics benchmarks, and surpasses both DeepSeek-V3.2 and GPT-OSS-120B (high) on the challenging APEX-shortlist benchmark under extended compute.
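The announcement does not spell out the Markovian RSA algorithm, so the following is only a minimal sketch of the general idea described above: several traces are generated, each trace reasons in fixed-length chunks, and only a short tail is carried from one chunk to the next. All names here (`toy_generate`, `markovian_trace`, `parallel_traces`, `chunk_len`, `carry_len`) are hypothetical, the model call is a stub, and how Zyphra combines the parallel traces is not stated and is left out.

```python
from typing import Callable, List, Tuple

Tokens = List[str]
# Hypothetical model interface: (context, max_new_tokens) -> newly generated tokens.
GenerateFn = Callable[[Tokens, int], Tokens]


def toy_generate(context: Tokens, max_new_tokens: int) -> Tokens:
    """Stand-in for a real model call; emits placeholder tokens deterministically."""
    start = len(context)
    return [f"tok{start + i}" for i in range(max_new_tokens)]


def markovian_trace(
    question: Tokens,
    generate: GenerateFn,
    chunk_len: int,
    carry_len: int,
    num_chunks: int,
) -> Tuple[Tokens, int]:
    """Reason in fixed-length chunks, passing only a short tail between chunks.

    Returns the final carried tail and the largest context size ever held.
    """
    carry: Tokens = []
    peak_context = 0
    for _ in range(num_chunks):
        # Earlier chunks are dropped: the context is the question plus the tail.
        context = question + carry
        new_tokens = generate(context, chunk_len)
        peak_context = max(peak_context, len(context) + len(new_tokens))
        # The tail is the only state handed to the next chunk (assumed design).
        carry = new_tokens[-carry_len:] if carry_len > 0 else []
    return carry, peak_context


def parallel_traces(
    question: Tokens,
    generate: GenerateFn,
    num_traces: int,
    chunk_len: int,
    carry_len: int,
    num_chunks: int,
) -> List[Tuple[Tokens, int]]:
    """Run several independent traces; a real system would batch these calls."""
    return [
        markovian_trace(question, generate, chunk_len, carry_len, num_chunks)
        for _ in range(num_traces)
    ]
```

In this sketch the peak context size depends only on the question length, `carry_len`, and `chunk_len`, not on `num_chunks`, which is one way to read the claim that reasoning length can grow while memory cost stays constant.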

"ZAYA1-8B demonstrates what is possible when architecture, pretraining, and reinforcement learning are co-designed toward a single objective: maximizing the intelligence extracted per parameter and per FLOP," said Krithik Puthalath, Founder and CEO of Zyphra. "This is the foundation of how we think about building efficient, scalable AI systems, and we are excited to continue scaling both model size and the breadth of domains our post-training stack covers."

ZAYA1-8B's performance reflects innovations across the full stack. The model incorporates three architectural changes: Zyphra's Compressed Convolutional Attention (CCA), a more efficient attention variant; a novel MLP-based expert router that improves routing stability over standard linear routers; and learned residual scaling, which controls residual-norm growth through depth at negligible parameter and FLOP cost. Post-training begins with a supervised fine-tuning phase, followed by a four-stage reinforcement learning cascade: a reasoning warmup on math and puzzles, an adaptive RLVE-Gym difficulty curriculum, large-scale math and code RL with test-time compute traces, and a final behavioral RL stage focused on chat quality and instruction following.
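To make the router comparison above concrete, here is a minimal sketch contrasting a standard linear router with an MLP-based one in a generic MoE layer. It is not Zyphra's implementation: the single hidden layer, the ReLU activation, the softmax-then-top-k selection, and every function name are assumptions chosen for illustration, and the sketch says nothing about routing stability.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[Vector]


def matvec(matrix: Matrix, vector: Vector) -> Vector:
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def softmax(logits: Vector) -> Vector:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear_router_logits(x: Vector, w: Matrix) -> Vector:
    """Standard linear router: one matrix maps the token to expert logits."""
    return matvec(w, x)


def mlp_router_logits(x: Vector, w_in: Matrix, w_out: Matrix) -> Vector:
    """MLP router: a hidden layer with a nonlinearity before the expert logits."""
    hidden = [max(0.0, h) for h in matvec(w_in, x)]  # ReLU is an assumption
    return matvec(w_out, hidden)


def top_k_experts(logits: Vector, k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-probability experts and renormalize their weights."""
    probs = softmax(logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Random weights standing in for learned parameters."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]
```

Both routers feed the same `top_k_experts` step, so the only difference in this sketch is how the expert logits are computed from the token representation.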

ZAYA1-8B was pretrained entirely on AMD hardware using a cluster of 1,024 MI300X GPUs with AMD Pensando Pollara interconnect, building on the AMD infrastructure foundation that also powers Zyphra Cloud.

Availability

ZAYA1-8B is available today at no cost as a serverless endpoint on Zyphra Cloud at cloud.zyphra.com, and the model weights are available on Hugging Face. ZAYA1-8B is released under an Apache 2.0 license. For technical details on architecture, pretraining, and post-training methodology, see the technical report.

About Zyphra

Zyphra is an open superintelligence research and product company based in San Francisco, CA, on a mission to build human-aligned AI that helps individuals and organizations reach their fullest potential. For more information, visit www.zyphra.com or contact press@zyphra.com.

Media Contact:

Paul White

Chief Business Officer

press@zyphra.com

www.zyphra.com

View original content to download multimedia:https://www.prnewswire.com/news-releases/zyphra-releases-zaya1-8b-a-reasoning-model-trained-on-amd-and-optimized-for-maximum-intelligence-density-per-parameter-302764700.html

SOURCE Zyphra